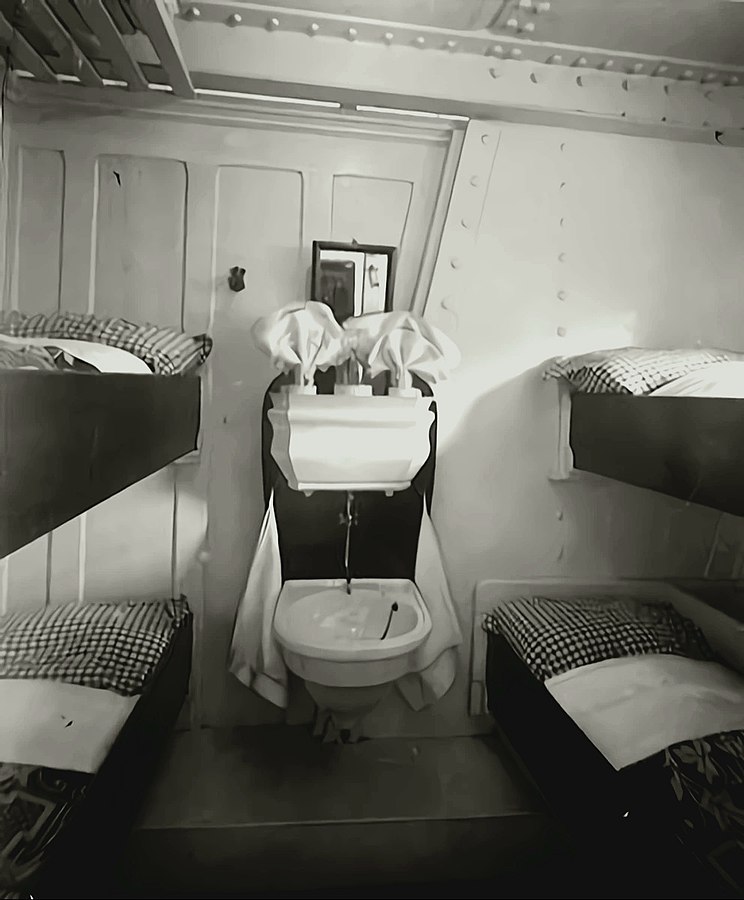

The black slope for all road trip novices. Photo by Raul Taciu.

If the mountain won’t come to the prophet, then the prophet must go to the mountain.

If the mountain is very far away, the prophet is well advised to go by horse, take an Uber or even a plane. I was lucky enough to be able to use the family car. Unfortunately, this car was not in Brussels but in North Rhine-Westphalia, Germany. Although a car is much smaller and more mobile than a mountain, it was once again me who had to go and get it.

I avoid cars whenever possible. I fly more often than I drive. So when I get to Germany for the handover of the car, we first drive together to the gas station so that I can check that I still know how to fill the tank, which I have not done for years.

The estimated total driving time to the Alps is 8 hours. Considering that my longest drive so far was 4 hours from Berlin to the Baltic Sea, I decide to split the ride in two and stay overnight halfway. On my way to the Alps I make two stops in Germany for grocery shopping: Fritz-Kola, Bionade, different kinds of nuts, Klöße, Spätzle and a few other specialities that are either very expensive in France or not available at all. In the end, little space is left in the car.

On the Road

I am on the Autobahn. I listen to the album A Night at the Opera by Queen. I listen to it twice. When I reach the last song, God Save the Queen, for the second time, there is still a lot of Autobahn ahead of me. I have to concentrate at every motorway junction. In between, at 120 km/h, time seems to stand still. Nothing happens. FLASH. Yikes! Apparently, I did not slow down fast enough and got caught by a speed camera. This would be the first administrative fine of my life.

For the next few minutes I watch the real-time fuel consumption per 100 km. I once heard that car engines are designed to be most fuel-efficient at an engine-specific speed. Without friction, a non-conservative force, I would only need fuel for the initial acceleration and to climb the mountains. On flat terrain, my consumption should then be close to 0. Hence, most of the fuel goes into balancing friction to maintain the speed. During my studies, we learned that friction f(v) is a function of the speed v and includes significant higher-order contributions in v. That is,

$$f(v) = \alpha v + \beta v^2 + \mathcal{O}(v^3).$$

Consequently, fuel consumption is optimal at a speed of v = 0. :thinking: I keep on driving.
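A back-of-the-envelope sketch of why this conclusion comes out, assuming positive coefficients α and β and dropping the higher-order terms: on flat terrain the work per unit distance equals the friction force, so the fuel per kilometre is roughly proportional to f(v), and f(v) only grows with v. The idle power P_0 in the second line is my own hypothetical addition, not something the dashboard told me; it stands for the fuel an engine burns just to keep running.

$$
\begin{aligned}
\frac{\mathrm{d}f}{\mathrm{d}v} &= \alpha + 2\beta v > 0 \quad \text{for } v \ge 0
  &&\Rightarrow\ f(v) \text{ is minimal at } v = 0, \\
c(v) &= \frac{P_0}{v} + \alpha v + \beta v^2
  &&\text{(consumption per distance with idle power } P_0 > 0\text{)}, \\
\frac{\mathrm{d}c}{\mathrm{d}v} &= -\frac{P_0}{v^2} + \alpha + 2\beta v = 0
  &&\Rightarrow\ 2\beta v^3 + \alpha v^2 = P_0 .
\end{aligned}
$$

Under that assumption, the left-hand side of the last condition grows monotonically from 0 for v > 0, so there is exactly one positive optimal speed, which would fit the rumour about an engine-specific sweet spot.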

I listen to the German audiobook Ich bin dann mal weg (English: I’m Off Then) on Spotify. Hape Kerkeling reads his account of his pilgrimage on the Camino de Santiago. In a way, I am also on a trip to find rest. I wonder if I should walk the Camino, too. Eventually, I arrive at my planned stop, a youth hostel in the south of Germany.

The youth hostel is quite busy. It accommodates 200 high school students from an international school in Germany, a seminar group of frequency therapy enthusiasts led by their guru, who lives in Canada and tours Europe once a year, and a group of professional cycling trainers. I get along best with the sports trainers and we start discussing social justice. I go to bed with the question of how different salaries for public servants in hospitals and schools can be justified.

On the Road Again

The next morning, I prepare myself for entering Switzerland. Using Swiss motorways requires a car vignette, which can be bought at the border. I leave the hostel.

Proud of myself, I fill up the car at the gas station all on my own. Then I drive through the city to get onto the motorway to Switzerland. FLASH. :unamused: Most likely, I got caught by a speed camera again. The forest of "30 km/h in the evening" street signs had changed to ordinary 30 km/h signs, while the street, with 3 lanes per direction, resembles a motor road. I feel cheated.

Without any further issues, I reach the border between Germany and Switzerland and buy a car vignette. The salesperson is very friendly and attaches the new vignette next to the collection of old ones, the oldest dating back to 2012. These vignettes are proof of my father’s travels, much like the stickers backpackers put on their backpacks for each country they have discovered.

My itinerary to the French Alps takes me past a few Swiss cities I do not know yet. I decide to leave the motorway and take a walk in downtown Basel. Not 5 minutes later, the police knock on my window while I wait at an intersection. We exchange friendly greetings. Then they ask me to park close by for an inspection. This is the first time in my life that I am subject to police scrutiny as a car driver. They let me know that expired vignettes must be removed in Switzerland. The penalty is 265€. Fortunately, the police officers are in a good mood and propose that I remove the vignettes as soon as possible and do not pay the penalty. I am easily convinced of this plan and accept without further ado. Then I discover how difficult it is to find a parking spot in Basel’s city centre. Eventually, I get my short walk. All in all, I do not like Basel so much.

I continue my journey to the French Alps and leave the highway again for the city centre of Bern, the de facto capital of Switzerland. Given my experience in Basel, I give up on finding a free parking spot and use the central parking garage right next to the old town. The sun shines. Bern was founded in the Middle Ages and the centre still has that charm.

People of Bern enjoying a warm Sunday afternoon in early March.

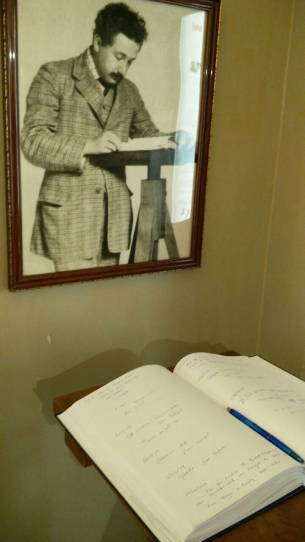

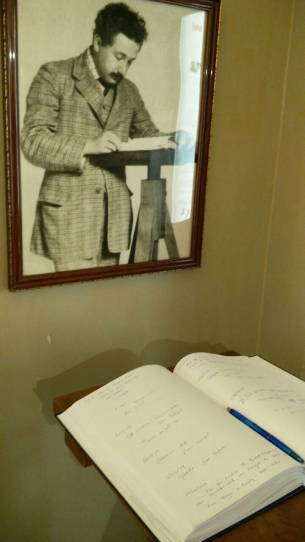

The living room of Einstein in Bern.

The guest book of the Einstein Museum Bern.

It is full of personal data.

While strolling to the old city, I discover an Einstein museum installed in his former flat. In the museum I learn that Einstein’s first and only daughter has been lost. I wonder if this daughter is still alive and knows about her famous parents. On the way out, I notice the museum’s guest book, in which people, many apparently hungry for significance after their fresh impressions of Einstein’s life, let other people know of their visit. For many, this is just a guest book. For me, it is a filing system containing personal data subject to data protection laws. As I need to arrive in the Alps before sunset, I decide not to inform the only staff member present about this discovery and just leave.

Eventually, I leave Bern and head towards Martigny and then Chamonix. In between, the motorway becomes a thin black slope that winds up the mountains in sharp curves. The temperature drops below freezing. Fortunately, the road is dry and clean. I continue with caution, much to the annoyance of the presumably local drivers queuing close behind me. I can only relax again once I find enough space at the side of the road to let them pass.

On the last kilometres before my destination, I pick up a hitch-hiker who is just finishing a shift with the snow rescue patrol. I get some tips on the best skiing spots before our paths part again. A quarter of an hour later, I arrive in my valley. Next time, I will think twice about whether I should rather take the train.
